RealBuddy: We Built an AI Buddy That Listens In on Conversations and Joins When It Has Something to Say

My colleagues and I regularly have discussions about AI — usually emotional, often based on half-knowledge, and that's exactly what makes them good. But at some point the question came up: What if someone could sit at the table who could, at least theoretically, fact-check everything?

Then there was a second thought that had been on my mind for a while: Why do we always use AI passively? We trigger, AI responds. Question-answer, question-answer. Autonomous AI — one that decides on its own when to chime in — always seemed more interesting to me. So we built RealBuddy.

What RealBuddy is

RealBuddy is not a voice assistant. No "Hey Siri", no commands, no question-answer mechanics. It's a passive listener — an AI buddy that listens in on conversations in the room and chimes in on its own when it has something useful to contribute. Like a friend sitting at the table who occasionally adds something.

The difference from traditional assistants: Alexa or Siri react to commands and deliver one answer to one question. RealBuddy follows the entire conversation, recognizes context, topics, and mood, and decides for itself whether and when to say something.

The decision system: When is it allowed to speak?

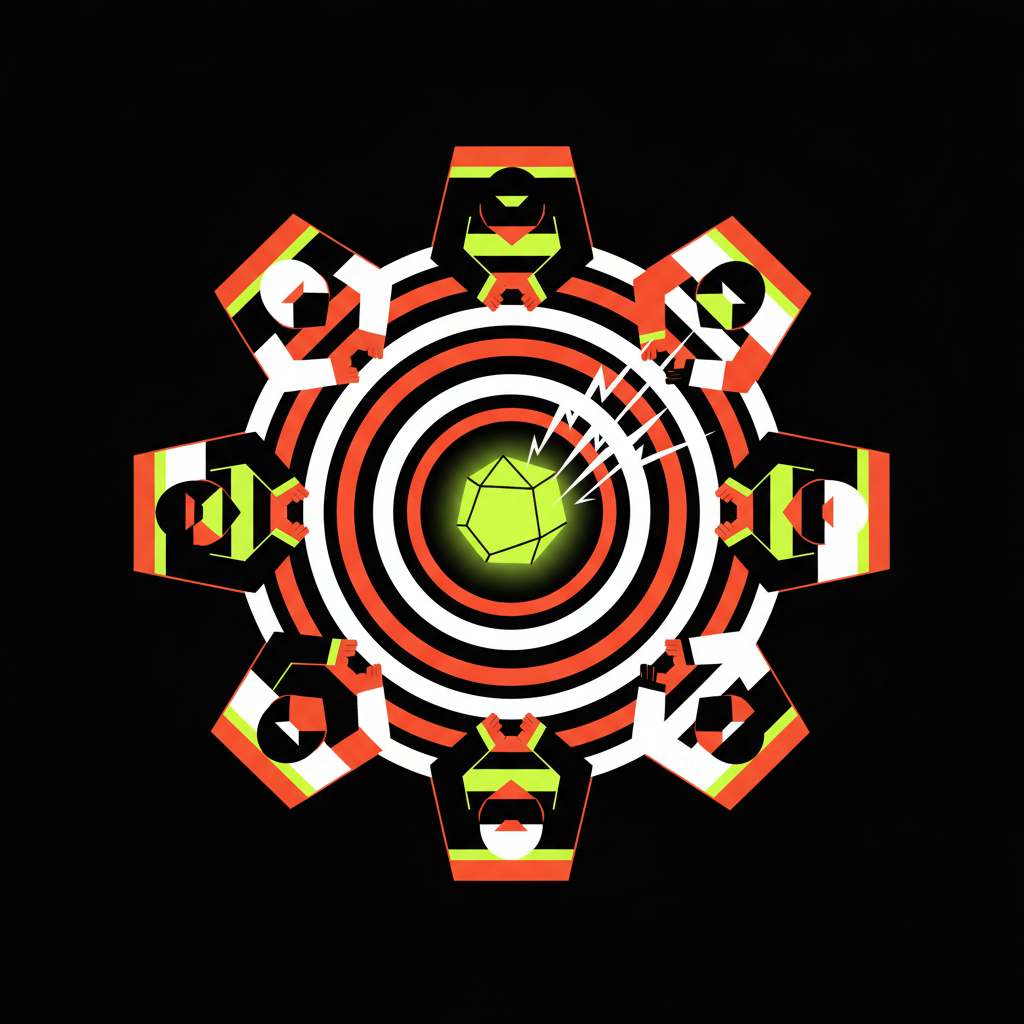

The most interesting part of RealBuddy isn't the speech recognition or answer generation — it's the decision whether to open its mouth at all. For this, we built a multi-stage gate system:

First it checks relevance: Is this a topic where it can add value? Then priority: Wrong facts in the conversation have high urgency, small talk less so. Then timing: Is there a natural pause? Is someone talking? And finally confidence: Are all factors combined high enough to chime in?
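A minimal sketch of how such a gate chain could look. The `Signals` fields, the 0..1 scales, the thresholds, and the way confidence is averaged are all assumptions made for illustration, not RealBuddy's actual values.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical per-moment inputs; names and 0..1 scales are assumptions."""
    relevance: float        # can the buddy add value on this topic?
    priority: float         # wrong fact = high, small talk = low
    someone_speaking: bool  # is a person talking right now?
    pause_seconds: float    # length of the current silence


def should_speak(s: Signals,
                 min_relevance: float = 0.5,
                 min_priority: float = 0.3,
                 min_pause: float = 1.0,
                 min_confidence: float = 0.6) -> tuple[bool, str]:
    """Run the four gates in order; return the decision and the gate that made it."""
    # Gate 1: relevance
    if s.relevance < min_relevance:
        return False, "relevance"
    # Gate 2: priority
    if s.priority < min_priority:
        return False, "priority"
    # Gate 3: timing (nobody is talking and the pause is long enough)
    if s.someone_speaking or s.pause_seconds < min_pause:
        return False, "timing"
    # Gate 4: confidence, here simply the mean of all three factors (assumed)
    timing_score = min(s.pause_seconds / 3.0, 1.0)
    confidence = (s.relevance + s.priority + timing_score) / 3.0
    return confidence >= min_confidence, "confidence"


if __name__ == "__main__":
    print(should_speak(Signals(0.9, 0.9, False, 2.0)))  # passes every gate
    print(should_speak(Signals(0.9, 0.9, True, 0.0)))   # blocked by timing
```

Ordering the gates from cheapest to most expensive means most moments are rejected early, before any combined score has to be computed.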

On top of that, there's a KPI tracker per topic with EMA (exponential moving average) smoothing so nothing reacts abruptly. It adds an organic warmup after speaking, staleness decay for old topics, and a repetition penalty so it doesn't repeat itself. Finally, there's a hard cooldown of 60 seconds between interventions.
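To make the smoothing concrete, here is a rough sketch of a per-topic tracker. Only the 60-second cooldown comes from the description above; the class name, `alpha`, the half-life, and the penalty factor are assumed values, and the warmup is left out for brevity.

```python
class TopicTracker:
    """EMA-smoothed score per topic with staleness decay and a hard cooldown.

    All parameter defaults except cooldown_s are illustrative assumptions.
    """

    def __init__(self, alpha=0.3, half_life_s=120.0,
                 cooldown_s=60.0, repeat_penalty=0.5):
        self.alpha = alpha                # EMA weight of the newest observation
        self.half_life_s = half_life_s    # an untouched topic halves in this time
        self.cooldown_s = cooldown_s      # minimum gap between interventions
        self.repeat_penalty = repeat_penalty
        self.scores = {}                  # topic -> (ema, time of last update)
        self.last_spoke_at = None
        self.last_topic = None

    def update(self, topic, raw, now):
        """Blend a new raw score (0..1) into the topic's EMA."""
        ema, _ = self.scores.get(topic, (0.0, now))
        ema = self.alpha * raw + (1 - self.alpha) * ema
        self.scores[topic] = (ema, now)
        return ema

    def score(self, topic, now):
        """Current score after staleness decay and repetition penalty."""
        if topic not in self.scores:
            return 0.0
        ema, updated_at = self.scores[topic]
        value = ema * 0.5 ** ((now - updated_at) / self.half_life_s)
        if topic == self.last_topic:
            value *= self.repeat_penalty  # damp the topic we just spoke about
        return value

    def can_speak(self, now):
        """Hard cooldown: True only if enough time has passed since speaking."""
        return (self.last_spoke_at is None
                or now - self.last_spoke_at >= self.cooldown_s)

    def mark_spoken(self, topic, now):
        self.last_spoke_at = now
        self.last_topic = topic
```

Because the EMA starts at zero, a single strong signal only moves a topic part of the way up; it takes several consistent observations before the score gets high.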

The goal was: It should feel like an attentive listener, not an overeager know-it-all.

The technical stack

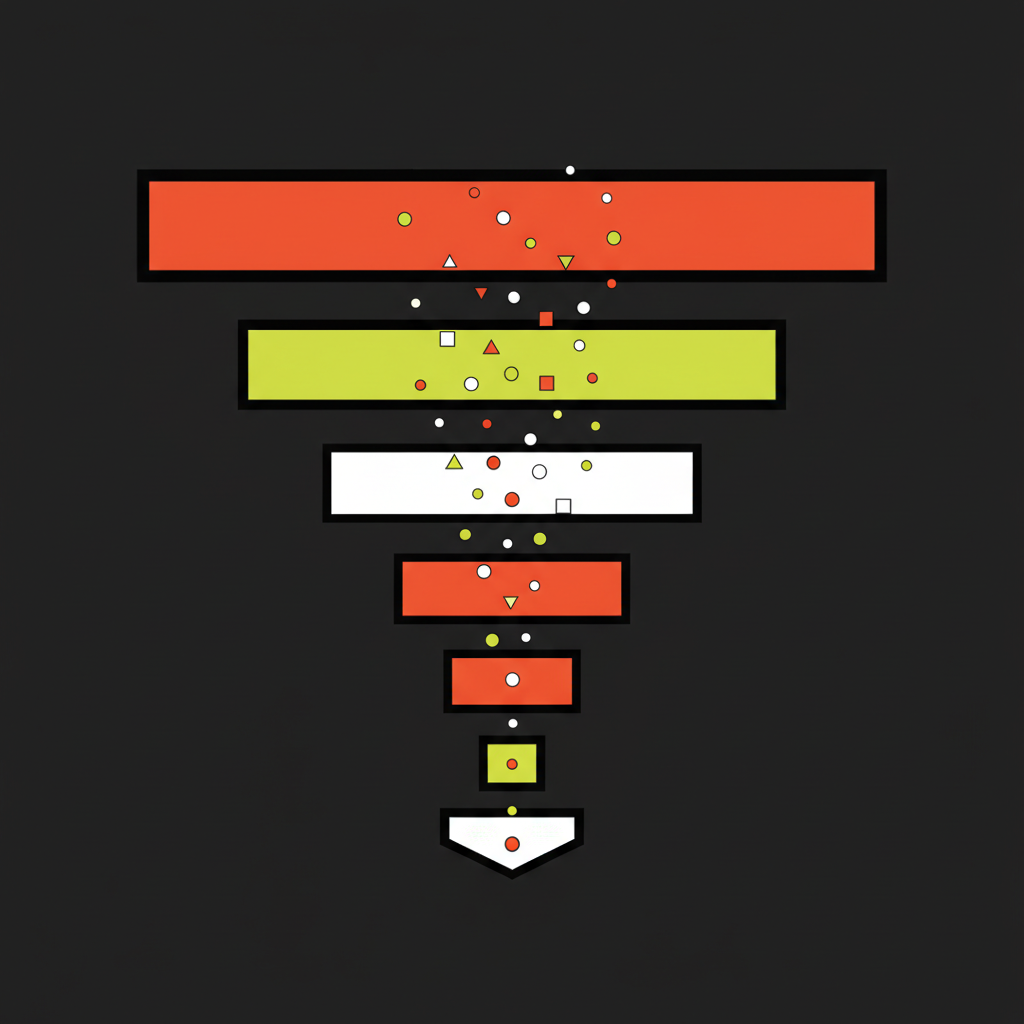

The audio pipeline: Silero VAD detects speech in the audio stream. Groq Whisper transcribes in 10-second chunks, which is not quite real time but extremely fast. The LLM analyzes context and formulates answers, and ElevenLabs turns them into speech.

For the LLM we use Groq: a larger model for answers and a smaller one for the quick analysis of whether to react at all. On top of that comes a dual RAG system with ChromaDB: one collection for conversations (what was said) and one for background research (facts that a background researcher automatically looks up on the web while we talk).

Audio comes in through the browser: the AudioWorklet API streams 16 kHz PCM via WebSocket to the server, and TTS audio comes back as binary WebSocket messages. The whole thing runs in a Docker container with a browser dashboard that is also usable from a phone.
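On the server side, the incoming binary frames have to be collected into the 10-second chunks that go to transcription. A small sketch of that buffering step follows; `ChunkBuffer` is a hypothetical name, and 16-bit mono samples are an assumption (the 16 kHz rate and the 10-second chunk length are from the text). The VAD step is omitted here.

```python
class ChunkBuffer:
    """Collects raw PCM bytes and emits fixed-length chunks for transcription."""

    def __init__(self, sample_rate=16_000, bytes_per_sample=2, chunk_seconds=10):
        # 16,000 samples/s * 2 bytes * 10 s = 320,000 bytes per chunk
        self.chunk_bytes = sample_rate * bytes_per_sample * chunk_seconds
        self._buf = bytearray()

    def feed(self, frame: bytes) -> list[bytes]:
        """Append one WebSocket frame; return every chunk that is now complete."""
        self._buf.extend(frame)
        chunks = []
        while len(self._buf) >= self.chunk_bytes:
            chunks.append(bytes(self._buf[:self.chunk_bytes]))
            del self._buf[:self.chunk_bytes]
        return chunks


if __name__ == "__main__":
    buf = ChunkBuffer()
    one_second = b"\x00" * 32_000          # one second of silence
    for _ in range(9):
        assert buf.feed(one_second) == []  # not enough audio yet
    print(len(buf.feed(one_second)))       # the tenth second completes a chunk
```

Keeping the leftover bytes in the buffer means frame sizes from the browser do not have to line up with chunk boundaries.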

From tray app to configurable buddy

RealBuddy went through several iterations. It started as a local macOS tray app with a single buddy and a fixed character. Then came a phase with four simultaneously active personalities: an 80s buddy, a dry sarcastic type, a warm-hearted one, and an East German politician. Each had its own voice, trigger behavior, and topic affinities. An arbitration system decided who got to speak.

In the end we simplified: one configurable default persona instead of four fixed ones. In the dashboard you can adjust the character prompt, choose from all ElevenLabs voices, and use a slider to set how talkative it should be. Answers are deliberately kept short — 1-2 sentences, maximum 80 tokens.

What came out of it

Development was iterative and conversation-driven. We tested, gave feedback, adjusted immediately. "Responds too quickly" — cooldown up. "Too long" — token limit down. "No empathy" — built emotional_need into the LLM analysis, with a fast track for sadness or frustration.

Three fast tracks proved particularly useful: fact correction (when someone is demonstrably wrong), empathy (when the mood shifts), and pending decisions (when a question hangs in the air that nobody answers).
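The three fast tracks can be pictured as a small routing function over the output of the quick analysis. In this sketch only `emotional_need` is a name from the text; the `Analysis` class, the other fields, the track labels, and the order of checks are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Analysis:
    """Hypothetical result of the quick LLM analysis of the latest transcript."""
    fact_error: bool = False               # someone stated something demonstrably wrong
    emotional_need: Optional[str] = None   # e.g. "sadness" or "frustration"
    open_question: bool = False            # a question nobody has answered


def fast_track(a: Analysis) -> Optional[str]:
    """Return the fast track that applies, or None to use the normal gates."""
    if a.fact_error:
        return "fact_correction"
    if a.emotional_need in {"sadness", "frustration"}:
        return "empathy"
    if a.open_question:
        return "pending_decision"
    return None


if __name__ == "__main__":
    print(fast_track(Analysis(emotional_need="frustration")))
    print(fast_track(Analysis()))
```

Returning `None` for the common case keeps the fast tracks as an exception path, so ordinary moments still go through the full decision system.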

The honest assessment: RealBuddy is an experiment, not a finished product. Sometimes it hits the perfect moment and drops exactly the right fact. Sometimes its timing is off, or it comments on something the conversation moved past long ago. But as a prototype it shows that autonomous AI interaction is possible, and that it feels fundamentally different from question-answer.

Conclusion

The question that led us to RealBuddy was actually simple: What happens when AI doesn't wait to be asked? The result is a system of about 15 Python modules that listens, thinks along, researches in the background, and chimes in when it believes it can contribute something.

Whether this is the future of AI interaction, I don't know. But it feels closer to how we interact with humans than anything I've experienced with voice assistants before. And it definitely made our table discussions more interesting — even though RealBuddy sometimes gets it wrong. But so do we.