How I Made an AI Model Faster on My MacBook — And What Went Wrong

Large language models are massive, but not every neuron works on every word. I tried to find the lazy neurons and remove them, using custom Metal kernels, predictor networks, and a pair of scissors.

I've been working on an experiment over the past few days: Can you make a large language model faster directly on your own machine — no cloud, no special GPU, just what a MacBook has?

The short answer: Yes. From 70 to 88 tokens per second, with 21% less memory usage. But the path there was anything but straightforward. Three out of four approaches failed — and that's exactly what makes this story interesting.

What the Problem Is

Large language models like GPT, Llama, or Qwen consist of billions of parameters. When running them locally, for example on a MacBook with an M1 chip, two things are scarce: memory and computing power.

A 7-billion-parameter model in compressed form (4-bit quantization) takes up about 4.2 gigabytes. That fits easily in the memory of an M1 Max with 64 GB, but speed is limited. The unmodified model runs at about 70 tokens per second. Usable, but not fast.
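
The size figure can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes group-wise 4-bit quantization in which every 64 weights share 32 bits of metadata (a scale and an offset). The function name, the group size, and the metadata size are illustrative assumptions, not values taken from the experiment.

```python
def quantized_size_gb(n_params: float, bits: int, group_size: int = 64,
                      overhead_bits_per_group: int = 32) -> float:
    """Estimate the weight footprint in GB (10**9 bytes).

    Assumes group-wise quantization: every `group_size` weights share
    `overhead_bits_per_group` bits of metadata (for example a 16-bit scale
    and a 16-bit offset). Both defaults are illustrative assumptions.
    """
    bits_per_weight = bits + overhead_bits_per_group / group_size
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n = 7e9  # nominal "7B"; real checkpoints often have somewhat more
    fp16 = quantized_size_gb(n, 16, overhead_bits_per_group=0)
    int4 = quantized_size_gb(n, 4)
    print(f"16-bit: {fp16:.1f} GB")
    print(f" 4-bit: {int4:.1f} GB")
```

Under these assumptions a nominal 7 billion weights come to roughly 3.9 GB. The remaining gap to 4.2 GB would be consistent with a real parameter count above 7 billion or with some tensors kept at higher precision, but that is a guess.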

The question was: can we get more out of it?

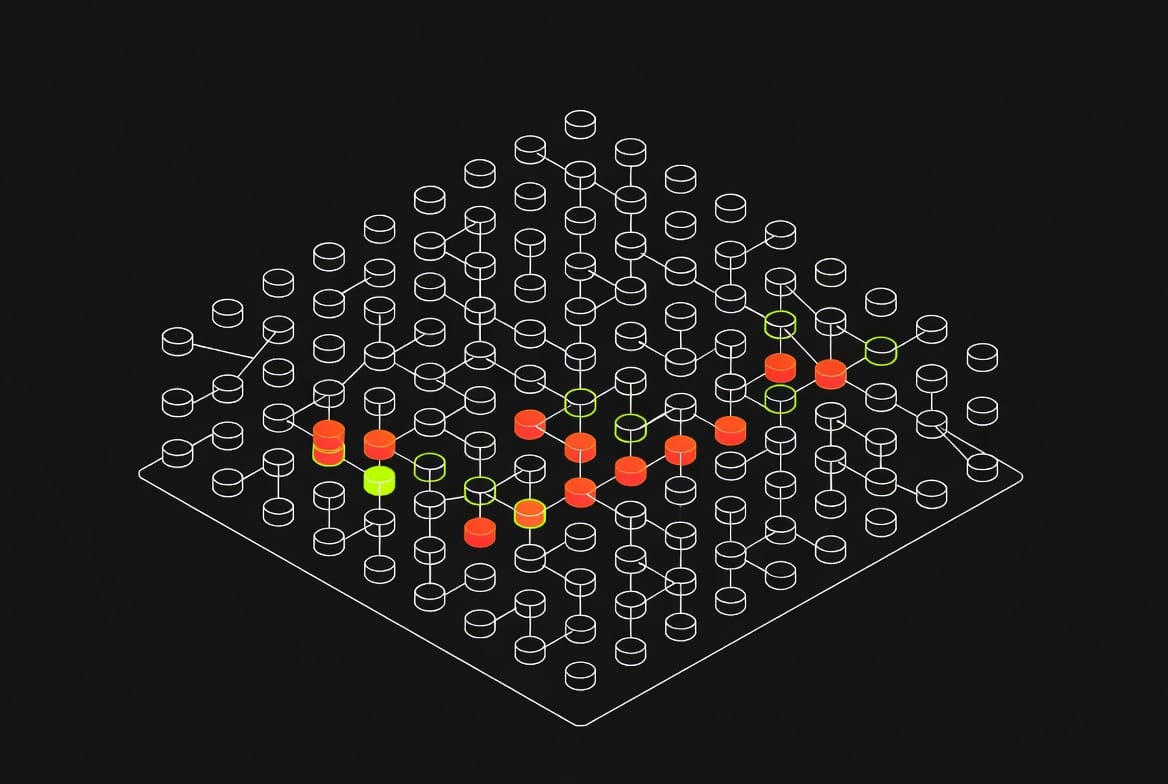

The Core Idea: Finding Lazy Neurons

To understand what I tried, an analogy helps. Think of a language model as an open-plan office with thousands of employees. With every request — every word the model generates — theoretically everyone is at their desk. In practice, only a fraction actually contributes anything meaningful. Most sit there producing values near zero.

The goal: identify the lazy employees and either skip them (sparsity) or fire them permanently (pruning). Both save computing time.

Attempt 1: Skipping Neurons at Runtime

The first approach sounds elegant: while the model runs, we check at each step which neurons are currently inactive and skip their computation.

For this, I wrote custom compute routines: Metal kernels that run directly on the Mac's GPU. The implementation was complex. One critical bug caused the code to compute only 14 of 3,584 neurons while the rest silently stayed at zero. The results looked plausible but were garbage. Bugs like this are insidious because the model still generates text, just worse text.

After the fix it worked correctly, but the result was sobering: ~70 tokens per second — virtually no speedup. The problem: each skip operation has to be dispatched individually to the GPU chip. The overhead of managing the skipping was as large as what you save.

Imagine the office manager running through the open-plan office checking every single desk to see if the employee is currently doing something useful. The checking takes as long as the work itself.
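
To make the idea concrete, here is a minimal sketch in plain Python of a feed-forward block that skips inactive neurons in the down projection. It is not the Metal kernel from the experiment: the ReLU activation, the function names, and the tiny weight matrices are assumptions chosen for illustration. Models in the Llama or Qwen family use gated activations whose values are near zero rather than exactly zero, so a real version would need a threshold above 0.

```python
def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def hidden_activations(x, w_up):
    """h_j = relu(w_up[j] . x) for every hidden neuron j."""
    return [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_up]


def dense_mlp(x, w_up, w_down):
    """Reference version: every hidden neuron feeds the down projection."""
    hidden = hidden_activations(x, w_up)
    n_out = len(w_down[0])
    return [sum(hidden[j] * w_down[j][k] for j in range(len(hidden)))
            for k in range(n_out)]


def sparse_mlp(x, w_up, w_down, threshold=0.0):
    """Same layer, but neurons with |h| <= threshold are skipped.

    Returns the output and the number of skipped neurons. Only the down
    projection is cheaper; the activations are still computed in full.
    """
    hidden = hidden_activations(x, w_up)
    active = [j for j, h in enumerate(hidden) if abs(h) > threshold]
    n_out = len(w_down[0])
    y = [sum(hidden[j] * w_down[j][k] for j in active) for k in range(n_out)]
    return y, len(hidden) - len(active)


if __name__ == "__main__":
    x = [1.0, -2.0]
    w_up = [[1, 0], [0, 1], [1, 1], [-1, 0]]        # 4 hidden neurons
    w_down = [[1, 2], [3, 4], [5, 6], [7, 8]]       # one row per hidden neuron
    print(dense_mlp(x, w_up, w_down))
    print(sparse_mlp(x, w_up, w_down))
```

Note that the sketch still has to compute every hidden activation before it can decide what to skip, so only the second matrix multiplication shrinks. On a GPU the selected rows additionally have to be gathered and dispatched, which is the overhead described above.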

Attempt 2: Predicting Who Will Be Lazy

If checking at runtime is too slow — what if we could predict which neurons will be inactive before we even compute them?

For this, I trained a small helper network for each layer in the model. These predictor networks are tiny — a few hundred thousand parameters versus billions in the main model. They look at the current state and say: "In this layer, neuron groups 5, 12, and 47 will be active, you can skip the rest."

The predictors achieved an average 89% accuracy. Sounds good. But the result was again sobering: 71.1 tok/s — barely faster than the 70 tok/s baseline. Same overhead problem. Whether you measure or predict the lazy neurons — the back-and-forth between control logic and GPU chip eats the gain.
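
How such a predictor is scored matters. The sketch below assumes the simplest possible predictor, a single linear layer with one logit per neuron group, and computes two metrics. All names and numbers are hypothetical, and the predictors in this experiment may have been built and evaluated differently.

```python
def predict_active(x, predictor_w, predictor_b):
    """Tiny linear predictor: one logit per neuron group, active if logit > 0."""
    return [sum(w * xi for w, xi in zip(row, x)) + b > 0.0
            for row, b in zip(predictor_w, predictor_b)]


def mask_accuracy(predicted, actual):
    """Fraction of neuron groups whose predicted state matches the real one."""
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


def recall_of_active(predicted, actual):
    """Share of truly active groups that the predictor did not skip."""
    kept = [p for p, a in zip(predicted, actual) if a]
    return sum(kept) / len(kept) if kept else 1.0


if __name__ == "__main__":
    x = [1.0, -1.0]                                  # current hidden state
    w = [[1, 0], [0, 1], [1, 1], [2, -1]]            # hypothetical predictor weights
    b = [0.0, 0.0, 0.5, 0.0]
    predicted = predict_active(x, w, b)
    actual = [True, False, False, True]              # measured by running the layer
    print(predicted, mask_accuracy(predicted, actual),
          recall_of_active(predicted, actual))
```

Plain accuracy treats both error types the same. Recall on the active groups is shown as well because skipping a group that should have fired changes the output, while computing a group that stays silent only wastes time.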

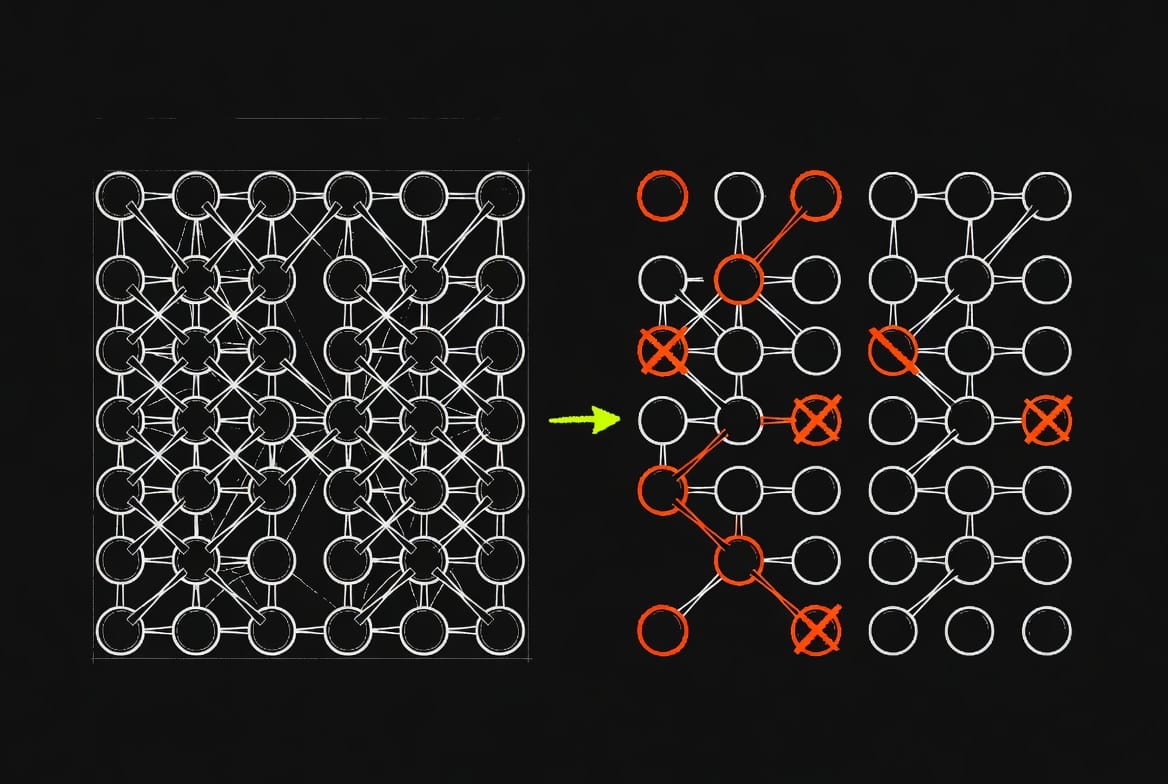

Attempt 3: Permanently Removing Neurons

After two failed approaches, a change in perspective: instead of skipping neurons at runtime, simply permanently remove them from the model.

It's like restructuring the open-plan office: instead of checking every day who's not working, you let the permanently inactive ones go and have a smaller but faster office afterward. No more management overhead.

The implementation: I fed 20 different texts through the model — English, German, Spanish, code, mathematics — and measured which neuron groups consistently contributed little. These groups are then permanently cut from the weight matrices. The result is a smaller model that runs normally with standard routines.
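
The procedure can be sketched roughly as follows, in plain Python with nested lists standing in for weight matrices. The helper names are hypothetical, the score (mean absolute activation) is one plausible reading of "consistently contributed little", and the sketch prunes single neurons rather than groups. In a gated feed-forward block the gate projection would have to be sliced with the same indices.

```python
def mean_abs_activation(activations_per_text):
    """Average |activation| per neuron over all calibration samples.

    `activations_per_text` is a list of samples; each sample is a list of
    hidden-activation vectors, one per token.
    """
    n_neurons = len(activations_per_text[0][0])
    totals = [0.0] * n_neurons
    count = 0
    for sample in activations_per_text:
        for vec in sample:
            for j, a in enumerate(vec):
                totals[j] += abs(a)
            count += 1
    return [t / count for t in totals]


def neurons_to_keep(scores, prune_fraction):
    """Indices of the surviving neurons, in their original order."""
    n_prune = int(len(scores) * prune_fraction)
    ranked = sorted(range(len(scores)), key=lambda j: scores[j])
    pruned = set(ranked[:n_prune])
    return [j for j in range(len(scores)) if j not in pruned]


def prune_mlp(w_up, w_down, keep):
    """Cut pruned neurons out of both matrices.

    Both matrices are stored with one row per hidden neuron:
    w_up[j] holds the input weights, w_down[j] the output weights.
    """
    return [w_up[j] for j in keep], [w_down[j] for j in keep]


if __name__ == "__main__":
    calibration = [
        [[0.1, 2.0, 0.0, 1.0], [0.3, 1.0, 0.0, 3.0]],   # text 1, two tokens
        [[0.2, 3.0, 0.0, 2.0]],                           # text 2, one token
    ]
    scores = mean_abs_activation(calibration)
    keep = neurons_to_keep(scores, prune_fraction=0.5)
    w_up = [[1, 0], [0, 1], [1, 1], [-1, 0]]
    w_down = [[1, 2], [3, 4], [5, 6], [7, 8]]
    print(keep, prune_mlp(w_up, w_down, keep))
```

Because the pruned matrices are ordinary, smaller matrices, the result needs no special kernels at inference time.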

And here it got interesting:

| Variant | Size | Speed | Speedup |
|---|---|---|---|
| Original | 4.2 GB | 69.8 tok/s | baseline |
| 20% removed | 3.6 GB | 79.9 tok/s | +14% |
| 30% removed | 3.3 GB | 87.9 tok/s | +26% |

With 30% of neurons removed, the model runs about 26% faster (69.8 to 87.9 tok/s) and uses 21% less memory (4.2 to 3.3 GB). Text quality noticeably decreases, but for many tasks the model remains usable.

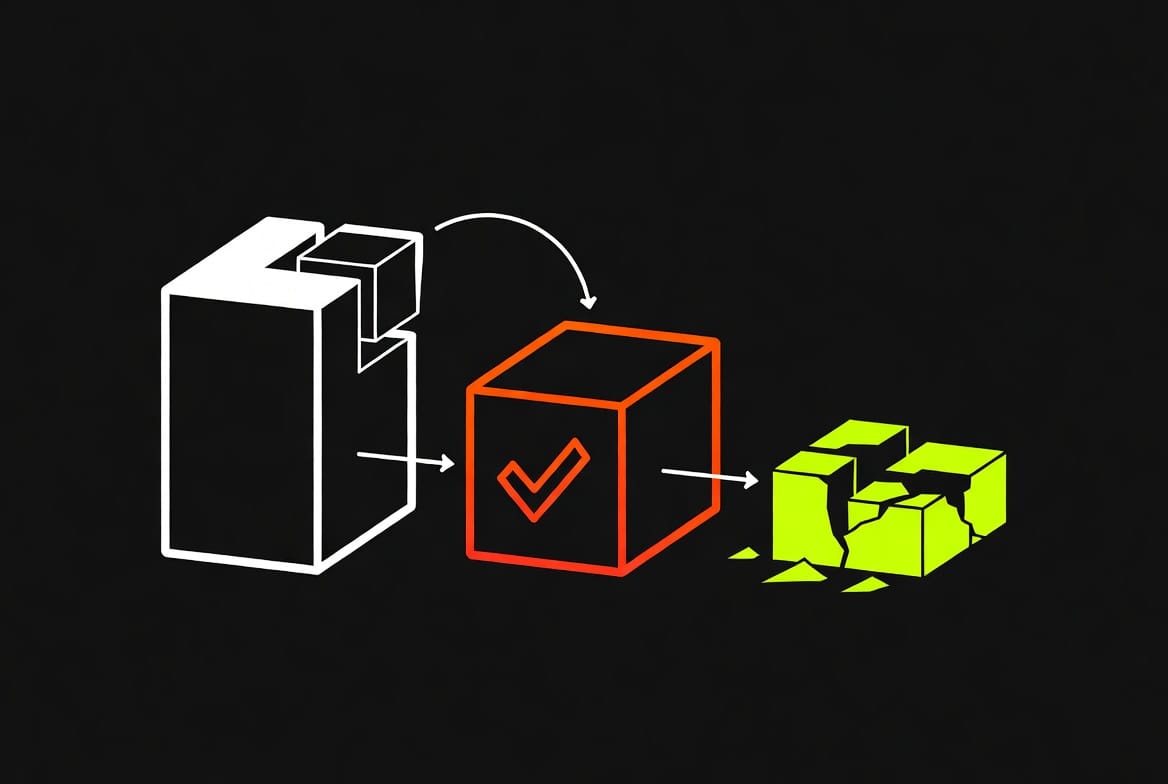

Attempt 4: Even Smaller Through More Aggressive Compression

Motivated by the pruning success, I wanted to go further: instead of removing the less important neurons, compress them more aggressively. Instead of 4-bit precision, just 2-bit, which halves their memory again.

The result: total failure. The model produced nothing but gibberish.

The reason is logical in hindsight: the model was already compressed from 16-bit to 4-bit. Going from these already compressed 4-bit values down to 2-bit is like saving a JPEG image as a JPEG once more: each step loses disproportionately more information. For meaningful 2-bit quantization, you'd need to start from the uncompressed original.

What I Learned

1. Permanently smaller beats dynamic skipping. On Apple hardware with the MLX framework, it was more efficient in my tests to use a permanently smaller model than to cleverly skip computations at runtime. The overhead of being clever eats the gain.

2. Not all neurons are equally important, but it's complicated. Simply measuring which neurons produce large values is a weak indicator. The research literature has better methods that measure the actual impact on the output. I haven't implemented those yet; that would be the next step.

3. Features are distributed across many neurons. This is the so-called superposition problem: a single concept isn't stored in a single neuron but distributed across many. When you remove neurons, the effects can be unpredictable — a neuron that looks harmless might be involved in ten different capabilities.

4. The compression chain has limits. From 16-bit to 4-bit works well. From 4-bit to 2-bit is a dead end. Each compression step must start from the best possible source material.

What Could Come Next

The biggest lever would be a better method for measuring neuron importance. Instead of just looking at how large their activation is, you could measure how much the final result changes when you remove them. Doing that by removing each neuron in turn is expensive; gradients give a cheap approximation by tracing back how much each neuron contributed to the final output.
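
A minimal sketch of the difference between the two measures, for a toy network with one hidden layer and a squared-error loss. It uses the first-order Taylor criterion |activation × gradient|, a common choice in the pruning literature and not something implemented in this experiment; all names and numbers are made up for illustration.

```python
def relu(v):
    return v if v > 0.0 else 0.0


def importance_scores(x, target, w_in, w_out):
    """Compare two importance measures for a one-hidden-layer network.

    Model: h_j = relu(w_in[j] . x),  y = sum_j w_out[j] * h_j,
    loss = 0.5 * (y - target)**2.
    Returns (activation_magnitude, taylor_saliency), one value per neuron.
    """
    hidden = [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in]
    y = sum(wo * h for wo, h in zip(w_out, hidden))
    dloss_dy = y - target
    # Chain rule: dLoss/dh_j = dLoss/dy * dy/dh_j = (y - target) * w_out[j]
    grads = [dloss_dy * wo for wo in w_out]
    magnitude = [abs(h) for h in hidden]
    saliency = [abs(h * g) for h, g in zip(hidden, grads)]
    return magnitude, saliency


if __name__ == "__main__":
    x = [1.0, 1.0]
    w_in = [[5.0, 5.0], [0.5, 0.5]]   # neuron 0 fires strongly, neuron 1 weakly
    w_out = [0.01, 2.0]               # but neuron 0 barely reaches the output
    magnitude, saliency = importance_scores(x, target=1.0, w_in=w_in, w_out=w_out)
    print("activation magnitude:", magnitude)
    print("taylor saliency:     ", saliency)
```

In the example, the first neuron has by far the largest activation, yet its tiny output weight makes it almost irrelevant for the loss. In practice the saliency would be averaged over many calibration samples, since a single sample whose loss gradient happens to be zero says nothing about importance.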

Also: not every layer in the model has the same amount of redundancy. An adaptive system that finds the optimal pruning rate per layer could significantly improve quality at the same speedup.
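
One simple way to arrive at per-layer rates, sketched under assumptions: pool the importance scores of all layers, cut at one global threshold, and see how many neurons each layer loses. Normalizing the scores by the layer mean is an assumption of this sketch to make layers comparable, and the function name is hypothetical.

```python
def per_layer_prune_rates(layer_scores, global_fraction):
    """Pick one global cut-off and report the resulting prune rate per layer.

    `layer_scores[i]` holds the importance scores of layer i. Scores are
    divided by the layer mean so that layers with different activation
    scales can share one threshold (an assumption of this sketch).
    """
    pooled = []
    for i, scores in enumerate(layer_scores):
        mean = sum(scores) / len(scores) or 1.0   # guard against all-zero layers
        pooled.extend((s / mean, i) for s in scores)
    pooled.sort(key=lambda item: item[0])

    n_prune = int(len(pooled) * global_fraction)
    pruned_per_layer = [0] * len(layer_scores)
    for _, layer in pooled[:n_prune]:
        pruned_per_layer[layer] += 1
    return [p / len(s) for p, s in zip(pruned_per_layer, layer_scores)]


if __name__ == "__main__":
    scores = [
        [1.0, 1.0, 1.0, 1.0],   # layer 0: every neuron matters equally
        [0.1, 0.1, 3.9, 3.9],   # layer 1: two neurons do almost nothing
    ]
    print(per_layer_prune_rates(scores, global_fraction=0.25))
```

In this toy case the whole pruning budget lands on the redundant layer while the other layer is left untouched. A practical version would probably also cap the rate per layer so that no single layer is hollowed out.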

And long-term, the combination of pruning and retraining (knowledge distillation) would be the most promising path: first prune, then have the smaller model learn from the original model as teacher. That could partially recover the quality losses from pruning.
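
The core of such a setup is the loss that pulls the student toward the teacher. Below is a minimal sketch of the temperature-softened KL divergence commonly used in knowledge distillation, in plain Python. The temperature value and the logits are arbitrary examples, and nothing like this was run in the experiment.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    Multiplied by temperature**2, as is conventional, so that gradient
    magnitudes stay comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)
    return kl * temperature ** 2


if __name__ == "__main__":
    teacher = [2.0, 0.0, -1.0]      # original model's logits for one token
    student = [0.0, 0.0, 0.0]       # pruned model before retraining
    print(distillation_loss(student, teacher))
    print(distillation_loss(teacher, teacher))
```

The loss is zero when the pruned model reproduces the teacher's distribution and grows as the two diverge, which is what retraining would try to minimize over a large text corpus.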